Can GenAI tools be bicycles rather than mobility scooters?

30 May 2025

Keeping human intent and mastery at the center

Generative AI use is going to increase, whether we like it or not. My two main activities, programming and art, are among the fields most affected by this revolution.

(the past) The computer: a bicycle for the mind

The metaphor of the computer as a bicycle for the mind is attributed to Steve Jobs.

If you want to draw, the computer gives you a canvas. It does not draw anything for you, but offers many conveniences:

- quick editing: move elements easily rather than redrawing everything

- easy checks on the outline (thumbnails, or value checks in black and white)

- layers: keep elements separate depending on what they are

- …many more
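The black-and-white value check is simple enough to sketch. Below, each RGB pixel is converted to a grayscale value with the Rec. 601 luma weights (the weights Pillow documents for `Image.convert("L")`), then bucketed into a few value bands, much like squinting at a thumbnail. The flat list-of-tuples pixel format, the helper names and the three-band default are illustrative assumptions, not how any particular painting program implements it.

```python
# Rec. 601 luma weights (the ones Pillow documents for Image.convert("L")).
LUMA_WEIGHTS = (0.299, 0.587, 0.114)


def luma(rgb: tuple[int, int, int]) -> int:
    """Perceived brightness of an 8-bit RGB pixel, from 0 (black) to 255 (white)."""
    return round(sum(w * c for w, c in zip(LUMA_WEIGHTS, rgb)))


def value_band(value: int, levels: int = 3) -> int:
    """Bucket a 0-255 value into `levels` bands (0 = darkest)."""
    return min(value * levels // 256, levels - 1)


def value_check(pixels: list[tuple[int, int, int]], levels: int = 3) -> list[int]:
    """Hypothetical helper: reduce an image (flat list of RGB pixels) to value bands."""
    return [value_band(luma(p), levels) for p in pixels]


if __name__ == "__main__":
    primaries = [(255, 0, 0), (0, 255, 0), (0, 0, 255)]
    print([luma(p) for p in primaries])  # [76, 150, 29]
    print(value_check(primaries))        # [0, 1, 0]
```

Pure red, green and blue all look equally vivid, yet their values range from 29 to 150; that kind of surprise is exactly what a value check is meant to catch.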

If you want to write, the computer gives you a word processor. It does not write anything for you, but offers many conveniences:

- quick editing: move text easily rather than rewriting everything

- dynamic outline

- bookmarks, search

- spelling and grammar checking

- …many more

These tools help you realize your ideas and increase the quality of your work. They expand your mind in ways that are not possible without the computer, because they greatly reduce the cost of iteration. This accelerates learning and puts great work within reach of a much larger pool of creative people.

A bicycle amplifies the power of your legs: you steer it and decide where to go. Still, running and cycling are separate activities, and their results are not exactly the same. In art, digital brushwork generally looks ugly compared to traditional brushwork. One may feel this is an unfair comparison, since the human brain naturally finds natural phenomena aesthetically pleasing, and the diffusion of paint on canvas activates those pathways.

These problems tend to be most obvious when a new tool is introduced, whether digital art, 3D, or, going further back, new pigments or paint tubes. This also explains why a new tool can meet pushback even without any ideological bias.

(the present) GenAI as a GPS-guided mobility scooter

A mobility scooter is not a tool that amplifies the power of your legs; it lets you avoid using them at all. Instead of building strength, it causes atrophy. It can help when deliberately used as a temporary crutch, but it only worsens problems in the long run.

The basic use of GenAI implies an inversion of control

Tools are generally powerful because they give you full control, including the ability to do ‘bad’ things. In music, a common trick is to restrict the notes to the pentatonic scale. This way, you can play anything and the result will sound pleasant, a far cry from hitting random keys on a regular piano. In painting, reducing the color palette (in the extreme, to black and white only) is the easiest way to avoid ugly results. Of course, these tricks limit what can be expressed. If you haven’t mastered the craft, the easiest path is to engage with as few dimensions as possible.
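The pentatonic trick can be made concrete. The sketch below snaps any MIDI note number to the nearest tone of a major pentatonic scale, so even random input stays in key. MIDI numbering (60 = middle C), the choice of the major pentatonic scale, and resolving ties downward are assumptions made for illustration.

```python
# Semitone offsets of the major pentatonic scale from its root (e.g. C D E G A).
MAJOR_PENTATONIC = (0, 2, 4, 7, 9)


def snap_to_pentatonic(note: int, root: int = 60) -> int:
    """Move a MIDI note to the nearest tone of the major pentatonic scale on `root`.

    MIDI 60 is middle C. Ties (a note exactly between two scale tones) go down.
    """
    rel = (note - root) % 12  # position of the note above the root, 0..11
    base = note - rel         # the root in the same octave as `note`
    # Scale tones in this octave, plus the next octave's root for notes just below it.
    candidates = [base + step for step in MAJOR_PENTATONIC + (12,)]
    # Sort key (distance, pitch): the closest tone wins, the lower one on a tie.
    return min(candidates, key=lambda c: (abs(c - note), c))


if __name__ == "__main__":
    random_keys = [61, 63, 65, 66, 70]  # C#, D#, F, F#, A#: all outside the scale
    print([snap_to_pentatonic(n) for n in random_keys])  # [60, 62, 64, 67, 69]
```

The design mirrors the trick itself: every input is forced into five pitch classes, which removes the ‘wrong’ notes and, with them, much of what could be expressed.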

A description (a prompt) vastly underspecifies what an image may look like.

In AI terms, going from a prompt to an image corresponds to a trajectory through the latent space.

In principle, the vast majority of these paths lead to something ugly.

GenAI is marketed as a tool for regular users and not masters.

As a result, there’s an incentive to prune the trajectories and keep only those considered beautiful.

At a foundational level, controls over features such as the value structure or how color transitions are handled (generally the most reliable tell of AI images) are not built into these systems; they are tacked on afterwards.

In short, it is easy to generate an aesthetically pleasing image, but excruciatingly hard to generate one that matches a very specific idea. For a regular user, this problem is not new: communicating with an artist is already difficult, especially since the idea in the requester’s head might not make sense. But for an artist, this is not even a tool.

Not all GenAI tools are equal; code is the outlier, since it is inherently an editable format with no translation layer. By analogy, image and music generators behave like a code generator that would output assembly at best, or just binary. Producing editable outputs instead is not straightforward; for music, it would require a whole pipeline.

Cycology: the illusion of explanatory depth in bicycles

The illusion of explanatory depth is a cognitive bias where people believe they understand a topic better than they actually do. The effect has been observed for so-called explanatory knowledge, i.e. “knowledge that involves complex causal patterns”. It is similar to the Dunning-Kruger effect, but the latter concerns ability rather than understanding.

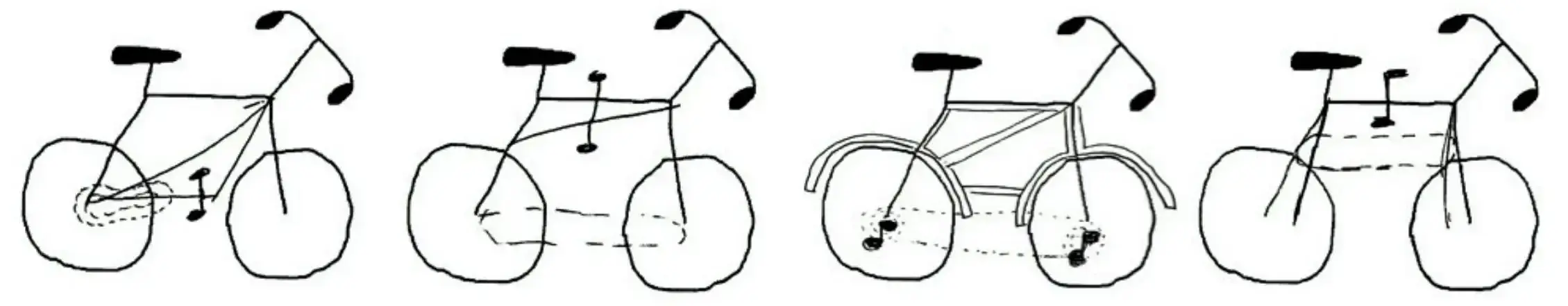

It was popularized by a 2006 study, The science of cycology: failures to understand how everyday objects work, which showed that people tend to overestimate their ability to explain how everyday objects work; the bicycle diagrams participants produced are quite striking.

As an artist, you often run head first into such illusions. Trying to draw an object from memory quickly makes the knowledge gap obvious, but only if you actually rack your brain before jumping to photo references.

If you never try to go beyond the surface level, there is no way to escape the illusion. GenAI tools make it easy to avoid building in-depth expertise, compounding the effect.

(the future) A positive direction: an open question

Beyond the creative concerns, GenAI has broader consequences, and everything ties into the original question.

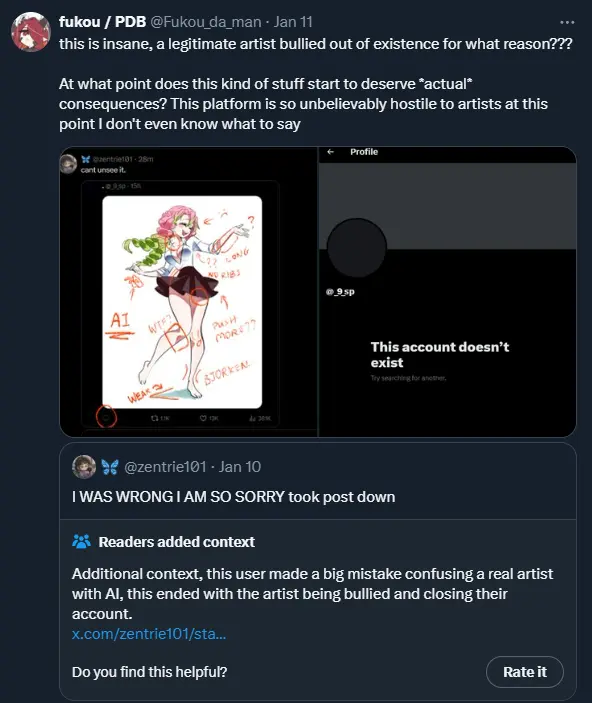

Free spam for everyone, everywhere

The cost of producing spam drops to effectively zero, whereas the cost of detecting it is enormous. This is true for music, images, and text. The result is paranoia and witch hunts.

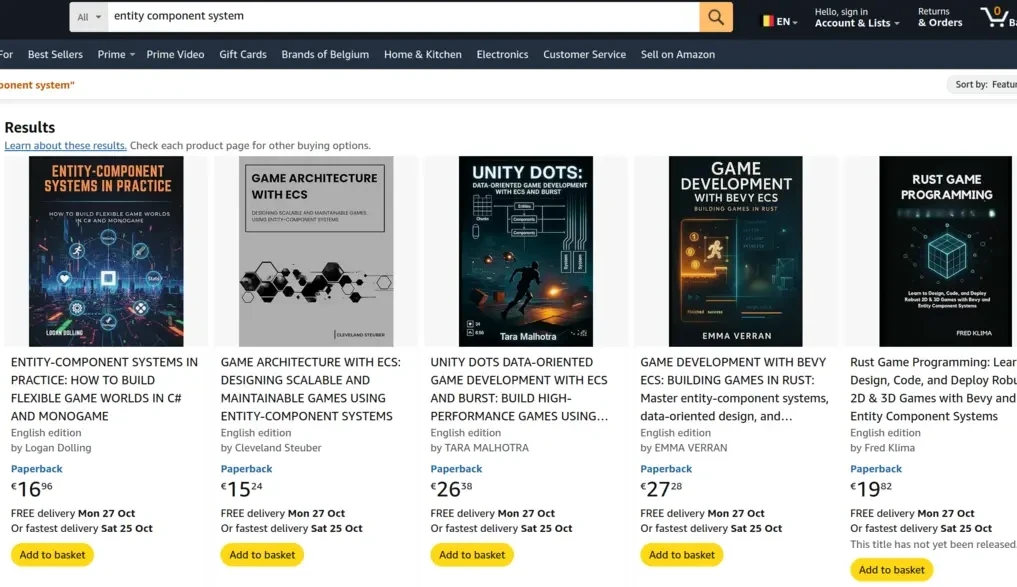

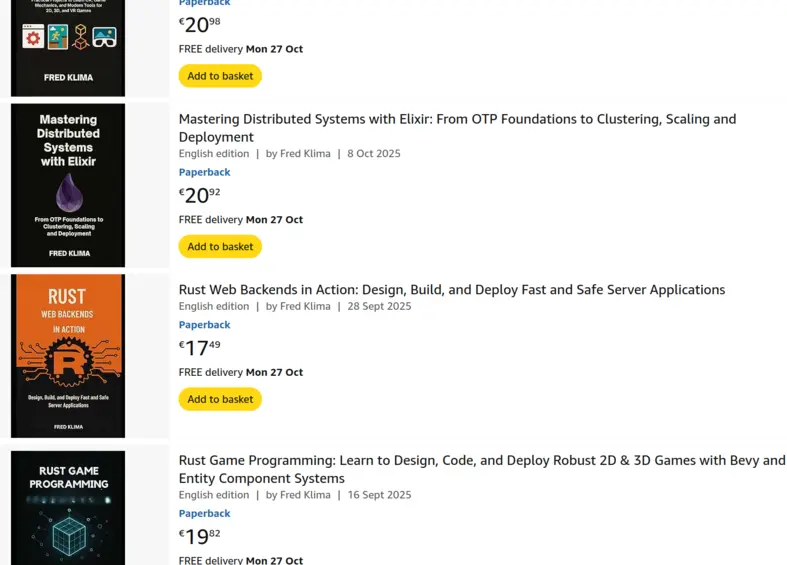

It also floods digital spaces with spam; the screenshots above show Amazon results for a simple technical query, and all the top results are AI-generated ‘books’.

These ‘authors’ manage to put out about one highly technical book per week. Honestly, these are rookie numbers: why not add 12,000 blog posts in a single commit?

It is unclear how this battle will unfold; the most likely outcome is more pollution, along with more invasive scrutiny.

Learning

LLMs can be used not as shortcuts but as teachers, providing feedback and analysis of surprising quality. Yet research suggests that most people use them the wrong way. To paraphrase, excessive reliance on generative AI can lead to cognitive atrophy, characterized by reduced memory, concentration, and analytical skills (How AI Impacts Skill Formation).

Many studies reach similar conclusions, and to the best of my knowledge none show positive effects. This suggests that any positive effects are either limited to specific individuals, offset by the negatives, or both.

New systems tailored to learning may be able to address this, and there is already some work in this direction, most notably by Andrej Karpathy; the financial incentives are well aligned for this use case.

Future work

At their core, generative AI tools are probabilistic models. Some of their limitations are very hard to overcome: even when the model has access to a tool that gives the right answer, it might ignore it. For programming, one way to circumvent this is to give the LLM access to theorem provers such as Lean or Coq.

However, the tremendous rate of progress keeps creating new tools and new opportunities, so even very niche tools targeting experts might become financially viable. This includes approaches that need less data to train for specific tasks; some companies are already working on this, although their offerings are still aimed at “enterprise” customers.
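To make the theorem-prover idea concrete: in Lean, a claim is only accepted if its proof type-checks, regardless of who (or what) proposed it. The Lean 4 snippet below is a minimal illustration using only the core library (`Nat.add_comm` and the `decide` tactic); the theorem name `my_add_comm` is hypothetical. A false statement such as `2 + 2 = 5` would simply fail to compile. How an LLM would be wired to such a checker is left open; this only shows the checking side.

```lean
-- Accepted only because the kernel checks the proof term against the statement.
theorem my_add_comm (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- Decidable claims can be checked by evaluation; `2 + 2 = 5` would be rejected.
example : 2 + 2 = 4 := by decide
```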

PS: I have been sitting on this one for about a year. The problem is more important than ever, and just as difficult as it was.

PPS: Several accompanying posts, currently unfinished drafts, will be published soon.